Large-scale multiplayer games are outgrowing the wider gaming market (MMORPG 7.7% CAGR vs gaming 6.2% CAGR), so it’s no wonder game studios and developers are racing to create next-gen MMORPGs. They’re looking to create lifelike, chaotic worlds that won’t disappoint players with invisible walls, loading zones, sharded worlds, and crashes at world events. Yet achieving this kind of immersion and scale requires a dedicated, expert team to design, build and support the necessary infrastructure. Capacity planning, deployment automation, orchestration: these are exactly the things you don’t want to sink time into as a game developer, because they constrain your imagination. With finite budgets, you are left making a compromise: either scale back the immersion or reduce the scale of the world.

In truth, these compromises are due to the complexity behind hosting these huge worlds. Masses of software abstractions designed to stitch these vast worlds together require huge teams with specialist expertise. Instead of improving the situation, they have the opposite effect: the hosted world becomes an opaque mesh of distributed computing complexity, and that grand gaming vision you had now needs to be engineered to the nth degree before you can even get started. Orders of magnitude in performance and efficiency are lost to these abstractions, which means that truly vibrant, dynamic and complex game worlds become prohibitively expensive to operate and maintain. In short, these layered abstractions have moved us further away from the gamer experience.

Perhaps it is time for a clean slate for MMORPGs: a new paradigm in spatial computing. It’s widely understood that octrees offer significant computational benefits, so why aren’t open worlds taking advantage of this from the ground up? Why do game developers insist on sharding the world across multiple servers and creating loading zones around player environments? The current stack of abstractions constrains scale, control and reliability, and it is beginning to show. At the same time, a true distributed dynamic octree is hard to achieve on large clusters of machines. There is simply no programming model atop the cloud that lets you treat it as if it were a single giant machine and build up data structures in the same familiar way game and engine programmers already do on single machines.

Elastic computing has long been the nirvana of adaptive scaling, but the software stack complicates what should be an elegant solution. What we want is a “living”, evolving data structure that knows its extent and limits and can grow dynamically as demand spikes. We want this data structure to expand onto, and preemptively instantiate, another server when the world is under high load, thus reducing the pressure. Instead, what we get is another server spinning up, after which we are left waiting for a mountain of applications to load, configure, and allocate before any useful computation can begin. In the end, we might be able to use this server to compute part of the game state.

For the developer, even the most modest MMORPG presents many difficulties. How will the world be split onto servers? Where will loading zones be placed to minimise disruption of the immersion? How will the player base itself be permanently divided, by invisible walls, into multiple “realms” that run on their own server clusters? What game features will be restricted or dropped because they are technically infeasible? All of this, before you can even design the game. And when you’re over this hurdle, now you need to carefully knit together the tooling and manage the orchestration and cloud services to try to make a seamless gameworld. Finally, the game might be working, you’re ready to playtest it at some kind of scale, and … something falls over. But where? This leads into the lengthy root cause analysis process, all while the gamers are left with a broken experience. Gamers are fickle creatures. They’ll happily take to Steam and write scathing negative reviews that have many a time commercially ruined a studio at launch because the game devs planned for a lower capacity and left players with a bad experience. The studios themselves become victims of their own success. The examples are many: Age of Conan, SimCity, Diablo 3, No Man’s Sky — all games that suffered from greater-than-anticipated player numbers.

Surely it doesn’t have to be this way? What if we could remove the bloat in the stack and get the perfect abstraction for elastic cloud computing nirvana? What if we designed gameworld architectures built on the optimizations of a massively distributed octree, with a small, clean process model that enables previously complex architectures to be built in weeks? We would remove the extra complexity that comes with all these layers and move dramatically closer to the hardware limits. Pushing the limits of what is even conceivable on hardware has been the overarching goal of the games industry from its first days up to today, from the earliest tic-tac-toe and tennis experiments on the oscilloscope to Wolfenstein 3D, Crysis and beyond.

This is precisely what we’ve attempted to do using the Hadean platform … and we built a prototype in 12 weeks.

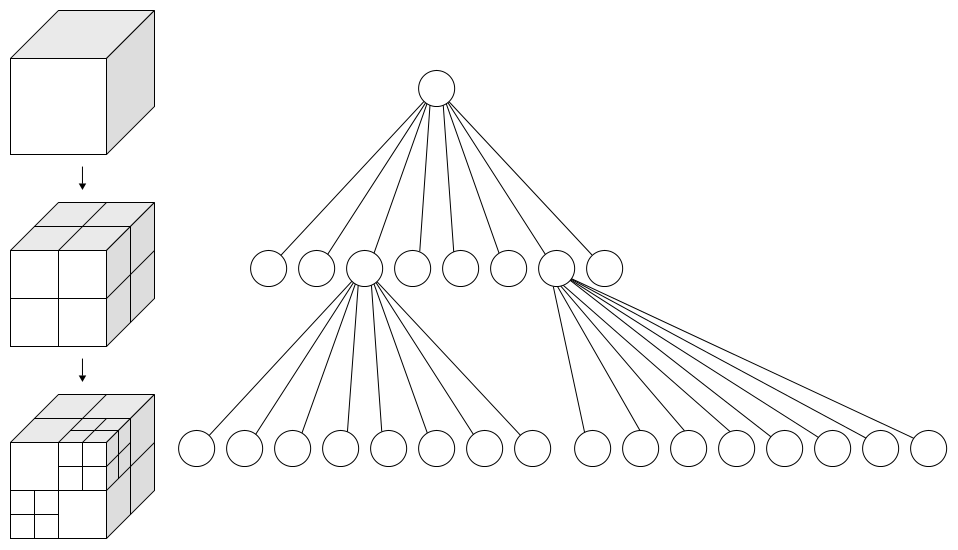

Our approach uses an octree to hierarchically subdivide space. The space covered by each leaf node is authoritatively computed by a single worker process. Leaf workers are dynamically spawned, split, merged and despawned based on instantaneous load. The tree structure adjusts naturally to the computation density of the space. Leaves communicate among themselves, sending entities and interactions locally or long-range.
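To make the split and merge behaviour concrete, here is a minimal single-process sketch of a load-adaptive octree. It is an illustration only, not Hadean's implementation: in the real system each leaf would be a separate worker process, whereas here a leaf is just an object holding a list of points. The names `Cell`, `max_load`, `min_load` and `min_half` are hypothetical, and "load" is assumed to be a simple entity count.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple

Point = Tuple[float, float, float]


@dataclass
class Cell:
    """One octree node; a leaf stands in for one worker's region of space."""
    center: Point
    half: float                               # half the edge length of the cube
    points: List[Point] = field(default_factory=list)
    children: Optional[List["Cell"]] = None   # None means this node is a leaf

    def is_leaf(self) -> bool:
        return self.children is None

    def _child_index(self, p: Point) -> int:
        # Bit 0 selects the x half, bit 1 the y half, bit 2 the z half.
        cx, cy, cz = self.center
        return int(p[0] >= cx) | (int(p[1] >= cy) << 1) | (int(p[2] >= cz) << 2)

    def insert(self, p: Point, max_load: int, min_half: float = 1e-6) -> None:
        if self.is_leaf():
            self.points.append(p)
            # Split when the leaf is overloaded (assumed metric: entity count).
            if len(self.points) > max_load and self.half > min_half:
                self._split(max_load, min_half)
        else:
            self.children[self._child_index(p)].insert(p, max_load, min_half)

    def _split(self, max_load: int, min_half: float) -> None:
        q = self.half / 2
        cx, cy, cz = self.center
        self.children = [
            Cell((cx + (q if i & 1 else -q),
                  cy + (q if i & 2 else -q),
                  cz + (q if i & 4 else -q)), q)
            for i in range(8)
        ]
        moved, self.points = self.points, []
        for p in moved:
            self.children[self._child_index(p)].insert(p, max_load, min_half)

    def merge(self, min_load: int) -> None:
        """Collapse children back into this node when their total load is low."""
        if self.is_leaf():
            return
        for c in self.children:
            c.merge(min_load)
        if all(c.is_leaf() for c in self.children):
            total = sum(len(c.points) for c in self.children)
            if total <= min_load:
                self.points = [p for c in self.children for p in c.points]
                self.children = None

    def leaves(self):
        if self.is_leaf():
            yield self
        else:
            for c in self.children:
                yield from c.leaves()
```

Inserting a dense cluster of points into a `Cell((0.0, 0.0, 0.0), 1.0)` root makes the tree deepen only where the cluster sits, which is the sense in which the structure follows the computation density of the space.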

To demonstrate the power of our approach, we developed a particle simulation using the Hadean octree. It handles a massive number of bodies in an O(N²) computation by closely approximating the computation density, and it scales across many cores and servers adaptively, without any user intervention.
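As a rough illustration of why spatial partitioning helps with an O(N²) workload, the sketch below counts pairwise interactions for one global region versus the same bodies spread evenly across cells. This is a back-of-the-envelope model under stated assumptions, not a description of the Hadean simulation: it assumes an even split and counts only pairs inside the same cell, ignoring the cross-cell interactions that a real system has to communicate. The function names and the figures in the usage note are illustrative.

```python
def pairs(n: int) -> int:
    """Number of unordered pairs among n bodies: n * (n - 1) / 2."""
    return n * (n - 1) // 2


def partitioned_pairs(n: int, cells: int) -> int:
    """Pairs evaluated when n bodies are split as evenly as possible across
    `cells` cells and only same-cell pairs are computed (an assumption)."""
    base, extra = divmod(n, cells)
    # `extra` cells hold one more body than the remaining cells.
    return extra * pairs(base + 1) + (cells - extra) * pairs(base)


def speedup(n: int, cells: int) -> float:
    """Ratio of global pair count to partitioned pair count."""
    return pairs(n) / partitioned_pairs(n, cells)
```

For example, `speedup(204_800, 256)` compares one global region with 256 cells of 800 bodies each. Under this simplified model the reduction in pairwise work is roughly proportional to the number of cells.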

To begin with, our simulation handled 800 entities per worker at 30 ticks per second, with no communication between workers, so the simulation was very limited. The next step was to enable communication between workers, so that the simulation range could extend beyond a single cell. With this enabled, we scaled up to 256 workers on multiple machines, reaching 200k points. In the next few weeks we hope to achieve over a million points. The only limitation is bandwidth, because we’re currently communicating the entire server state, across many servers, to a remote client sitting outside the cloud at “real-time” framerates of 30–60 FPS. In the real world, you’ll only be transferring a portion of the server state to any single client, as the player will only occupy a region. Also, when viewing the entire server state for debugging, you’d typically not do this at 30–60 FPS. As such, the limitation is contrived, but it is ultimately a limit imposed by hardware: the link between the cloud and your remote connection.
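To get a feel for the bandwidth limit, the following sketch estimates the raw data rate of streaming the full point state to one client. The post does not say how points are encoded, so the 12 bytes per point (three 32-bit floats, with no headers, velocities or compression) is purely an assumption, and `stream_rate_bits` is a hypothetical helper.

```python
def stream_rate_bits(points: int, frames_per_second: int,
                     bytes_per_point: int = 12) -> int:
    """Raw bits per second needed to send every point on every frame.

    bytes_per_point defaults to 12, i.e. three 32-bit floats per position.
    This is an assumed encoding, not a figure from the simulation.
    """
    return points * frames_per_second * bytes_per_point * 8


# 200k points at 30 FPS versus a million points at 60 FPS, in Mbit/s.
current_mbit = stream_rate_bits(200_000, 30) / 1e6
target_mbit = stream_rate_bits(1_000_000, 60) / 1e6
```

Under these assumptions the current demo already needs several hundred megabits per second to a single remote client, and the million-point target at 60 FPS needs ten times more, which is why the link to the client, rather than the simulation itself, becomes the bottleneck.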

https://player.vimeo.com/video/243665713

Our octree implementation allows highly efficient indexing and communication. The master node that operates the octree requires only a tiny amount of bandwidth to each worker, allowing it to handle hundreds or thousands of workers simultaneously. The abstraction is such that the workers don’t need to have any knowledge of the octree, only about their entities and the neighbors they interact with. All this is supported by simple communication primitives that take care of spawning and locating new cells.
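One common way to index octree cells compactly is a Morton (Z-order) code, which interleaves the bits of the integer cell coordinates. The post does not say which indexing scheme Hadean uses, so the sketch below is a stand-in technique and not the actual implementation. The `directory` mapping, the `locate` helper and the worker names are all hypothetical; the directory plays the role of the small index the master keeps, mapping each leaf cell to the worker that owns it.

```python
from typing import Dict, Optional, Tuple


def morton3(x: int, y: int, z: int, bits: int) -> int:
    """Interleave the low `bits` bits of x, y and z into one Morton code."""
    code = 0
    for i in range(bits):
        code |= ((x >> i) & 1) << (3 * i)
        code |= ((y >> i) & 1) << (3 * i + 1)
        code |= ((z >> i) & 1) << (3 * i + 2)
    return code


def locate(directory: Dict[Tuple[int, int], str],
           x: int, y: int, z: int, max_depth: int) -> Optional[str]:
    """Find the worker owning grid position (x, y, z).

    Coordinates are integers on the finest grid (2**max_depth cells per axis).
    Dropping three bits from the code moves one level up the tree, so we walk
    from the deepest level towards the root until a registered leaf is found.
    """
    code = morton3(x, y, z, max_depth)
    for depth in range(max_depth, -1, -1):
        key = (depth, code >> (3 * (max_depth - depth)))
        if key in directory:
            return directory[key]
    return None
```

With this layout a lookup costs at most `max_depth + 1` dictionary probes, and a worker only needs the keys of its neighbouring cells rather than any view of the whole tree.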

This data structure allows almost infinite scale and resolution for spatial computation. That could be a massive number of players all in the same gameworld, without any sharding or invisible walls. For example, a dense space battle with thousands of players, handled seamlessly and without time dilation.

As this is built atop Hadean’s Operating System and Platform technology, what you get is a full-blown OS underneath, not just some wrapper around an actor model and a game engine. Developers are free to use other libraries, perhaps Torch for AI and Havok for physics on each local region of space. These SDKs all run in massively parallel fashion, with the distribution, dynamic scale and complexity handled by the distributed octree.

We believe our octree-focused design considerably lowers the barriers to truly massive-scale MMOs and opens up the potential for a new wave of immersive large-scale worlds, with true complex systems dynamics and sophisticated AI hitherto unseen.

Let us know what you’re designing and the challenges you’re facing with MMOs in the comments below.